Other useful HTML elements

In this post, I'm going to introduce some HTML elements that are still useful in modern web development, even if they aren't used as often as div or span.

TLDR: These HTML elements are useful for accessibility and search engine optimization

You can build most webpages using only div and span elements, along with the structural elements html, head, and body. However, it's important to know about other elements if you want your page to rank highly in Google search results, or you want your page to be accessible to people with disabilities.

Headings and titles

Heading elements are used to show titles or subtitles. They are important to your visitors because they make text more readable. They are important to Google because Google uses them to understand the content of your pages.

Heading elements are h1, h2, h3, h4, h5, and h6. The h1 element is the top-level title of the page, and should generally occur only once on a page. Otherwise, SEO tools may show a warning for that page, and it may not rank as highly in web searches.

Here's an example of an h1 element.

<h1>I'm a page title that can be seen by the visitor</h1>

It's important to note that there IS an actual title element in HTML. When the title element appears in the head section, it sets the text that is shown in the tab of your web browser.

<title>This is the text at the top of the tab</title>
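To see how the two fit together, here is a minimal sketch of a page that uses both the title element and a small heading hierarchy. The recipe text is made up for illustration.

```html
<!DOCTYPE html>
<html>
  <head>
    <!-- Shown in the browser tab, not in the page itself -->
    <title>My favorite recipes</title>
  </head>
  <body>
    <!-- The single top-level heading, visible on the page -->
    <h1>My favorite recipes</h1>

    <!-- h2 starts a section under the h1 -->
    <h2>Breakfast</h2>
    <!-- h3 starts a subsection under the nearest h2 -->
    <h3>Pancakes</h3>

    <h2>Dinner</h2>
  </body>
</html>
```

Each heading level nests under the level above it, a bit like an outline.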

Paragraphs in HTML

Before CSS was invented and standardized, there were lots of HTML elements used to format text. You can still use some of these, but it is widely discouraged. The "b" element bolds the enclosed text. The "i" element applies italics to the enclosed text. The "br" element adds a line break. You should generally avoid using those elements for styling, but there is one element that is still quite useful.

The "p" tag is used to enclose a paragraph of text. It is useful as something separate from a div because it includes useful browser default styles, and because it serves as a good way to style the body text for your page.

<p>I'm a paragraph with some nice browser default styles.</p>

Browser defaults are a complex and controversial topic. Each browser starts with its own set of default styles so that a basic HTML page will be readable. There are many modern packages that override or eliminate these styles so that they don't interfere with a site's custom styles. I don't have a strong opinion on browser defaults in either direction.

Lists in HTML

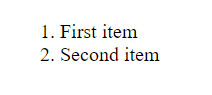

Lists are another bit of semantic HTML that is still widely used. There are two main types of lists in HTML: ordered lists and unordered lists. These are represented by the "ol" and "ul" elements respectively. List items are represented by the "li" element.

So what's the difference between the two list types? Ordered lists are numbered, while unordered lists are bulleted.

<ol>

<li>First item</li>

<li>Second item</li>

</ol>

If you drop that into an HTML file then you will see something like the following.

I encourage you to replace the "ol" elements with "ul" and see what happens.

Nav, main, and footer elements in HTML

Finally, I want to recommend using the elements nav, main, and footer. These elements encapsulate the navigation portion of the page, the main content of the page, and the footer of the page. They are absolutely VITAL for accessibility, because they tell screen readers which part of the page they are reading. You need to remember accessibility for two reasons. First, it is important to allow as many people as possible to access your site. Second, Google may rank your site lower if you don't.

They are used just like divs.

<nav>

<ul>

<li>Menu item 1</li>

<li>Menu item 2</li>

<li>etc</li>

</ul>

</nav>
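Here's a sketch of how all three elements might sit together in one page. The link targets and text are placeholders I made up for illustration.

```html
<!DOCTYPE html>
<html>
  <head>
    <title>Nav, main, and footer</title>
  </head>
  <body>
    <!-- Site navigation -->
    <nav>
      <ul>
        <li><a href="/">Home</a></li>
        <li><a href="/about">About</a></li>
      </ul>
    </nav>

    <!-- The primary content; a page should have only one visible main element -->
    <main>
      <h1>Page title</h1>
      <p>The main content goes here.</p>
    </main>

    <!-- Closing information such as copyright or contact details -->
    <footer>
      <p>Footer text goes here.</p>
    </footer>
  </body>
</html>
```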

Introduction to CSS

In this post, I'm going to introduce CSS, and demonstrate how it can be used to apply styles to a web page.

TLDR: CSS defines the look and feel of a web page

After building the structure of a page using HTML, you can add styles to HTML elements using CSS. If HTML is a car's frame, then CSS is the body shape, the paint job, and the rims.

What is CSS?

CSS stands for Cascading Style Sheets. Programmers and web designers use CSS to describe the colors, fonts, and positions of HTML elements. Web browsers interpret the CSS on a page to show those styles to viewers.

CSS can be added to an HTML document in multiple ways. It can be added directly to HTML elements using inline styles, it can be added to the head section using style elements, or it can be included from another file.

A simple inline CSS example

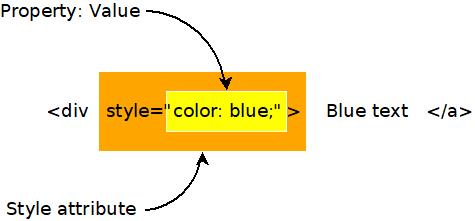

Here's an example of a very simple use of inline CSS using the style attribute on an element. All it does is set the font color to blue.

<div style="color: blue;">This text should be blue.</div>

To try this in your own HTML file, just type that code within the body section of the page. If you did it right, then the text should appear blue.

There are three important parts of this inline CSS. The first is the style attribute on the div element. Use the style attribute to apply inline styles. The second is the word "color", which specifies which property of the element should be styled. The third important bit is the word "blue", which specifies the color. There are many ways to specify colors in CSS, and using names is the simplest.
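As a quick illustration of those other ways, here is a small sketch that sets the same blue color three times: once by name, once as a hexadecimal value, and once with the rgb() function. All three lines should look identical in the browser.

```html
<!-- A color name -->
<div style="color: blue;">Blue by name.</div>

<!-- A hexadecimal value holding the red, green, and blue channels -->
<div style="color: #0000ff;">Blue by hex value.</div>

<!-- The rgb() function with the same three channels -->
<div style="color: rgb(0, 0, 255);">Blue by rgb value.</div>
```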

I'm introducing inline styles here because they are useful for debugging. However, inline styles are impractical for a number of reasons, so we're going to look at a few other ways of incorporating CSS into an HTML document.

A simple CSS style element example

CSS styles can also be included in a document using the HTML style element. Usually such styles are placed in the head section of the document.

Using the HTML style element like this is generally discouraged, however, because the styles are not reusable in other documents. That's why styles are usually stored in separate files that can be included in any HTML document.

It's good to know about style elements, however, because they are sometimes used in emails. Many email clients won't load styles from other files, so when creating a beautiful email, style elements are sometimes the best option.

<style>

span {

background-color: red;

}

</style>

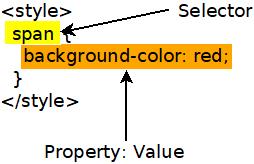

This CSS rule sets the background color to red for all span elements on a page. Here's how it might look in a full HTML document.

<!DOCTYPE html>

<html>

<head>

<title>Divs and spans</title>

<style>

span {

background-color: red;

}

</style>

</head>

<body>

<div>

This is a div and <span>this is a span</span>.

</div>

<div>

Notice that <span>all span elements on the page receive the style indicated in the rule above</span>. This is due to how CSS selectors work.

</div>

</body>

</html>

Here's a graphic showing how that style breaks down into parts. The selector is used to select which elements will receive the style. The property and value describe the style to apply, just like when using inline styles.

What are selectors?

The problem with inline CSS is that it clutters up the code for your webpage, and it's not reusable. A single style declaration can be twenty or more lines long. That's a lot to insert into an HTML element, and adding it makes the code difficult to read. Also, inline styles only apply to the element they are on. If you want to use an inline style twice, then you have to duplicate it, which is a big no-no in programming. Fortunately, there are other ways to write CSS.

CSS can be included in a "style" HTML element. CSS written in the style element uses syntax called "selectors" to apply styles to specific elements on a page.

For example, say you wanted all span elements on your page to have a larger font. You might include something like the following in the head section of your HTML document.

<style>

span {

font-size: 2em;

}

</style>

That selector selects all elements with a given tag name. You could use "div" to style all divs, or "h1" to style all h1 elements. But what if you want to be more specific? What if you only want to style some elements of a given type?

If you want to be more specific, then you can add class or id attributes to your HTML, then use those attributes in your CSS selectors.

Here's an example that shows a class attribute on a div and a selector that uses the class value. Notice that the class name is preceded by a period in the selector.

<style>

div.blueText {

color: blue;

}

</style>

<div class="blueText">This text should be blue</div>

Here's an example that shows how to use the id attribute and selector. Notice that the hash symbol (#) precedes the id in the CSS selector.

<style>

#blueText {

color: blue;

}

</style>

<div id="blueText">This text should be blue</div>

Usually class is used when you want to have many things that share the same style. The id attribute is used when you are styling something that is unique on the page.
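Here's a small sketch of that difference. The names "warning" and "pageTitle" are made up for this example. Notice that the class selector here has no tag name in front of it, so it matches any element with that class, whether it's a div or a span.

```html
<style>
  /* A class can be reused on as many elements as you like */
  .warning {
    color: red;
  }

  /* An id should match only one element on the page */
  #pageTitle {
    font-size: 2em;
  }
</style>

<h1 id="pageTitle">Only one element uses this id</h1>
<div class="warning">This text should be red.</div>
<span class="warning">This text should be red too.</span>
```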

Including CSS in a separate file

Inline styles and style elements are not the most common way of including styles in a web page. Usually styles are imported from a stylesheet file that contains CSS and ends with the .css extension.

Type the following code into a file named css_example.css.

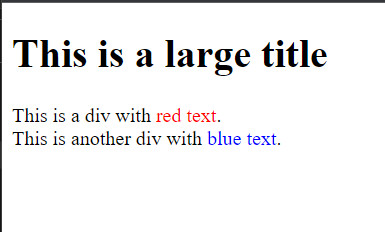

span.blue {

color: blue;

}

span.red {

color: red;

}

#title {

font-size: 2rem;

}

Now type the following code into a file named css_example.html.

<!DOCTYPE html>

<html>

<head>

<title>CSS Example</title>

<link rel="stylesheet" href="css_example.css">

</head>

<body>

<h1 id="title">This is a large title</h1>

<div>

This is a div with <span class="red">red text</span>.

</div>

<div>

This is another div with <span class="blue">blue text</span>.

</div>

</body>

</html>

If you did it all correctly, then you should end up with something like this, when opened in a web browser.

What are all the CSS properties?

CSS can be used to style just about any aspect of an HTML element. With CSS you can create shadows, animations, and nearly anything else you see on the web. In my posts I'm going to focus on the most common CSS properties, but if you want to see the complete list of things that can be styled with CSS, then check out MDN's comprehensive list of CSS properties.
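To give a taste of what's possible, here is a minimal sketch that adds a shadow to a div using the box-shadow property. The class name "card" and the specific sizes are arbitrary choices for illustration.

```html
<style>
  .card {
    /* Space between the text and the edge of the box */
    padding: 1em;
    /* Shadow: x offset, y offset, blur radius, color */
    box-shadow: 2px 2px 6px gray;
  }
</style>

<div class="card">This box should have a soft gray shadow.</div>
```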

Summary

- CSS is the style layer of the web.

- CSS is usually imported into an HTML file from a separate CSS file.

- The element, class, and id selectors allow you to target your styles to specific elements.

More

CSS is quite large and complex, but you don't have to know everything about it to use it effectively. You only need to know the fundamentals, and be able to use web search to find the other stuff.

Project: Personal web page

This post contains the first assignment in my free web programming course.

Now that you know a little HTML and CSS, you should be able to produce some simple web pages. In this assignment, I want you to create a web page about yourself. It should be pretty similar in content to my about-me page on my website, but it should be about you and the things you like.

Here's what it must contain.

- A title that says your name and is styled like a title.

- A picture of you or something you like.

- A short paragraph of at least three sentences. You can use Lorem Ipsum if you can't write three sentences about yourself.

- A quote you like that is styled like a quotation. Usually quotes are indented more and displayed in italics.

- At least three links to things you've done or things you like. Use html lists to show these.

Introduction to HTML

In this post, I'm going to introduce HTML, and demonstrate how it acts as the skeleton for all pages on the internet.

TLDR: HTML defines the structure of the page

HTML is used to define the structure of a web page. It's the structure that your styles (CSS) and code (JavaScript) sit on top of. The CSS styles the HTML elements. The code allows interaction with HTML elements.

What is HTML?

HTML stands for Hyper Text Markup Language. The unusual phrase "hyper text" means "more than text". Hyper text can be contrasted to plain text. You read plain text in a printed book. The words string together to form a story. But what if you want to know more about a topic? What if you want a definition for a term? What if you want to email the author? HTML gives web programmers the ability to "mark up" the text in a way that allows web browsers to add additional functionality to a text document.

HTML is one of the key inventions that enabled the web to become so popular. The ability to add cross-references directly in a document enables all sorts of connections and interactions that were formerly impossible. Nearly every page on the internet is made using HTML.

What are HTML elements?

The classic example of a hyper text element is a link. When links were invented, they were called "anchors", as if the author is anchoring a bit of text to another document. When you want to add a link to an html document you use the anchor or "a" element. Here's an example.

<a href='https://www.myblog.com'>My blog</a>

Wow, that's pretty confusing, isn't it? But take a moment to look more closely. What parts of it do you see?

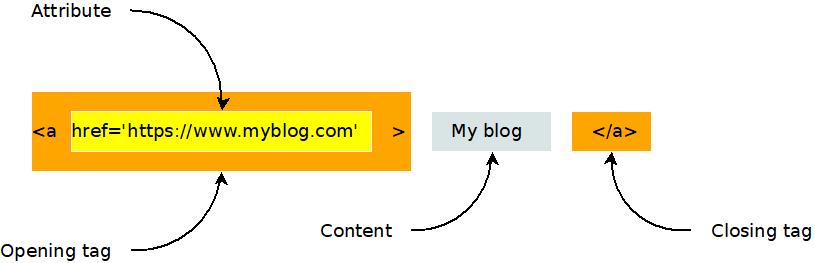

An html element can have an opening tag, any number of attributes, content, and a closing tag, as shown in this diagram.
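The same breakdown can be sketched in text form, using HTML comments to label each part of the link from the example above. I've used double quotes around the attribute value here, but single quotes work too.

```html
<!-- Opening tag: <a href="https://www.myblog.com"> -->
<!--   Attribute name:  href -->
<!--   Attribute value: https://www.myblog.com -->
<!-- Content:     My blog -->
<!-- Closing tag: </a> -->
<a href="https://www.myblog.com">My blog</a>
```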

Here's another example of an HTML element. It's the image, or "img" element.

<img src="https://www.evanxmerz.com/images/AnchorTag_01.jpg" alt="Anchor tag diagram"/>

Notice that the image element shown here doesn't have a closing tag. Elements like img never have any content, so they have no closing tag. The "/" before the final ">" is optional in HTML, but many programmers include it to show that the element ends there.

The structure of an html document

HTML documents are composed of numerous html elements in a text file. The HTML elements are used to lay out the page in a way that the web browser can understand.

<!DOCTYPE html>

This must be the first line in every modern html document. It tells web browsers that the document is an html document.

<html>

The html element tells the browser where the HTML begins and ends.

<head>

The head element holds information about the document, such as its title, and tells the browser what other resources the document needs. These include styles, JavaScript files, fonts, and more.

<body>

The body element contains the content that will actually be shown to the user.
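Here's a rough sketch of a head section that pulls in other resources. The file names "styles.css" and "app.js" are hypothetical, so the browser will only find them if you create files with those names next to the HTML file.

```html
<!DOCTYPE html>
<html>
  <head>
    <title>Page with resources</title>
    <!-- Load styles from a separate file (hypothetical file name) -->
    <link rel="stylesheet" href="styles.css">
    <!-- Load JavaScript from a separate file (hypothetical file name). -->
    <!-- The defer attribute runs the script after the page has been parsed. -->
    <script src="app.js" defer></script>
  </head>
  <body>
    This text is shown to the user.
  </body>
</html>
```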

An example html document

Here's an example html document that contains all of the basic elements that are required to lay out a simple HTML document.

I want you to do something strange with this example. I want you to duplicate it by creating an html document on your computer and typing in the text in this example. Don't copy-paste it. When you copy-paste text, you are only learning how to copy-paste. That won't help you learn to be a programmer. Type this example into a new file. This helps you remember the code and gets you accustomed to actually being a programmer.

What about typos though? Wouldn't it be easier and faster to copy-paste? Yes, it would be easier and faster to copy-paste. However, typos are a natural part of the programming experience. Learning the patience required to hunt down typos is part of the journey.

Here are step-by-step instructions for how to do this exercise on a Windows computer.

- Open Windows Explorer

- Navigate to a folder for your html exercises

- Right click within the folder.

- Hover on "New"

- Click "Text Document"

- Rename the file to "hello_world.html"

- Right click on the new file.

- Hover over "Open With"

- Click Visual Studio Code. You must have installed Visual Studio Code to do this.

Now type the following text into the document.

<!DOCTYPE html>

<html>

<head>

<title>Hello, World in HTML</title>

</head>

<body>

Hello, World!

</body>

</html>

Congratulations, you just wrote your first web page!

Save it and double click it in Windows Explorer. It should open in your default web browser, such as Google Chrome, and look something like this.

There are many HTML elements

There are all sorts of different HTML elements. There's the "p" element for holding a paragraph of text, the "br" element for making line breaks, and the "style" element for embedding CSS styles in a document. Later in this text we will study some additional elements. For making basic web pages, we're going to focus on two elements: "div" and "span".

If you want to dig deeper into some other useful HTML elements, see my post on other useful html elements.

What is a div? What is a span?

That first example is pretty artificial. The body of the page only contains the text "Hello, World!". In a typical web page, there would be additional html elements in the body that structure the content of the page. This is where the div and span elements come in handy!

In the "div" element, the "div" stands for Content Division. Each div element should contain something on the page that should be separated from other things on the page. So if you had a menu on the page, you might put that in a div, then you might put the blog post content into a separate div.

The key thing to remember about the "div" element is that it is displayed in block style by default. This means that each div element creates a separate block of content from the others, and each block starts on a new line.

<div>This is some page content.</div>

<div>This is a menu or something.</div>

The "span" element differs from the div element in that it doesn't create a new section. The span element can be used to wrap elements that should be next to each other.

<span>This element</span> should be on the same line as <span>this element</span>.

An example will make this more clear.

Another example html document

Again, I want you to type this into a new html file on your computer. Call the file "divs_and_spans.html". Copy-pasting it won't help you learn.

<!DOCTYPE html>

<html>

<head>

<title>Divs and spans</title>

</head>

<body>

<div>

This is a div and <span>this is a span</span>.

</div>

<div>

This is another div and <span>this is another span</span>.

</div>

</body>

</html>

Here's what your page should look like.

It's not very exciting, is it? But most modern webpages are built using primarily the elements I've introduced to you on this page. The power of html isn't so much in the expressivity of the elements, but in how they can be combined in unique, interesting, and hierarchical ways to create new structures.
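As a small sketch of that kind of hierarchy, here are divs nested inside other divs to group a menu and a list of posts. The text content is made up for illustration.

```html
<div>
  <!-- A div can contain other divs and spans -->
  <div>
    Menu: <span>Home</span> <span>About</span>
  </div>
  <div>
    <div>First post</div>
    <div>Second post</div>
  </div>
</div>
```

The outer div groups the whole page, while the inner divs split it into a menu and a list of posts.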

Summary

In this section I introduced Hyper Text Markup Language, aka HTML. Here are the key points to remember.

- HTML is the skeleton of a web page.

- HTML elements usually have opening and closing tags, as well as attributes.

- HTML documents use the head and body elements to structure the page.

- Div and span elements can be used to structure the body of your webpage.

How to set up a computer for web development

In this post, I'm going to suggest a basic set of tools required for web development. These instructions are aimed at people who are using a desktop computer for web development.

TLDR: Install Google Chrome, Visual Studio Code, and npm

Here are links to the three tools that I think are vital to web development.

What tools are needed for web development?

A programmer of any sort needs two basic tools:

- A tool to write code.

- A tool to run the code they have written.

Code is usually represented as text, so to write code you will need a text editor. Today most programmers use a text editor that has been adapted for the task of writing code, called an Integrated Development Environment, or IDE. The IDE that most programmers prefer in 2023 is Visual Studio Code. It's developed by Microsoft, which has a long history of creating high quality products for developers.

Traditionally, code is compiled and run by a computer's operating system. However, many modern systems take code written by a developer, then run it live using a tool called an interpreter. In this series, we're going to be writing JavaScript code, then running it in the interpreter in Google Chrome or in Node.

So those are the three tools we need to install to set up a basic environment for writing code for the web: Visual Studio Code, Google Chrome, and Node.

Node also includes a tool that is fundamental to web development called Node Package Manager, or NPM for short. NPM is a tool for downloading chunks of code written by other programmers, or packages, and using them in your own app.

More

Here are some other resources and perspectives on setting up your computer for web programming.